The AI Function

KI Absicherung develops and investigates methods and measures for safeguarding AI-based perception functions in highly automated driving. The project will establish an exemplary, stringent safety argumentation that shows how AI-based functions can be safeguarded in principle.

To this end, the project is developing methods and metrics that quantify the performance and safety of an AI function and thereby provide evidence for the safety argumentation.

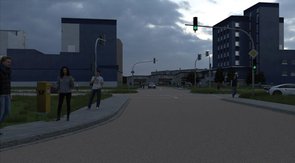

KI Absicherung is establishing this argumentation using pedestrian detection as its example, chosen for its safety relevance. Existing pedestrian detection algorithms will be tested and evaluated with respect to their detection performance and their capacity to be safeguarded, on the basis of defined quality metrics. The results are then used to derive a stringent safety argumentation.
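
As an illustration of what a detection-performance metric for pedestrian detection can look like, the following minimal sketch computes precision and recall for one image by matching predicted bounding boxes to ground-truth boxes. The project's actual quality metrics are not specified here; the intersection-over-union (IoU) matching rule, the threshold of 0.5, and the names `iou` and `detection_metrics` are assumptions chosen for illustration only.

```python
from typing import List, Tuple

# Axis-aligned box as (x_min, y_min, x_max, y_max); a hypothetical convention.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def detection_metrics(
    ground_truth: List[Box],
    detections: List[Box],
    iou_threshold: float = 0.5,  # assumed threshold, not taken from the project
) -> Tuple[float, float]:
    """Return (precision, recall) using greedy one-to-one IoU matching."""
    unmatched = list(ground_truth)
    true_positives = 0
    for det in detections:
        # Pick the still-unmatched ground-truth box that overlaps most.
        best = max(unmatched, key=lambda gt: iou(det, gt), default=None)
        if best is not None and iou(det, best) >= iou_threshold:
            unmatched.remove(best)
            true_positives += 1
    false_positives = len(detections) - true_positives
    false_negatives = len(unmatched)  # pedestrians that were missed
    precision = true_positives / (true_positives + false_positives) if detections else 0.0
    recall = true_positives / (true_positives + false_negatives) if ground_truth else 0.0
    return precision, recall
```

In a safety context, recall is usually the more critical of the two, since each false negative corresponds to a pedestrian the function failed to detect.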